The Hidden Costs of Poor AI Training Data in Machine Learning

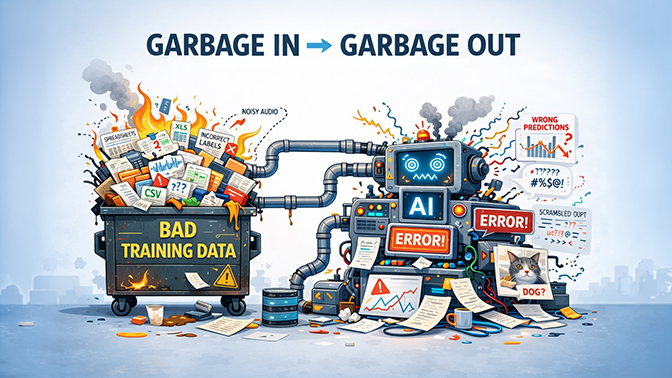

Poor training data can break AI systems, increase costs, and introduce bias. Learn the hidden costs of low-quality datasets, real-world AI failures, and how organizations can build better training data pipelines.

Artificial intelligence has advanced dramatically over the past decade. Modern machine learning models can recognise speech, detect diseases, identify faces, and generate human-like text.

Yet despite these technological breakthroughs, many AI systems still fail in production.

The most common reason is not the algorithm. It is the data.

Machine learning systems learn patterns from AI training datasets. The quality of AI training data directly determines how well a machine learning model performs. When those machine learning datasets are incomplete, biased, noisy, or incorrectly labelled, the models inherit those problems. As a result, even sophisticated AI systems can produce inaccurate predictions, unfair outcomes, or unreliable behaviour.

For organizations investing in AI, dataset quality has become one of the most critical factors determining whether a project succeeds or fails.

The Growing Cost of Poor Data Quality

Poor data quality carries significant financial consequences.

Gartner’s data quality research and guidance highlight the substantial business impact of poor data quality. A widely cited IBM estimate from 2016 put the cost of poor data quality in the United States at about $3.1 trillion per year.

These losses occur in several ways:

| Impact | Description |
|---|---|
| Engineering cost | More time spent cleaning and fixing datasets |
| Infrastructure cost | Additional compute required for retraining |
| Operational delays | AI products delayed or redeployed |
| Reputational damage | AI failures reduce trust in automation |

In AI projects specifically, data problems can cascade through the entire machine learning pipeline. Once poor-quality data enters a system, its errors and biases propagate throughout downstream models and decisions.

The Core Problems in Training Data

1. Labelling Errors

Most supervised machine learning systems rely on labeled data.

Examples include:

- speech recordings paired with transcripts

- images paired with object labels

- documents tagged with entities or categories

If those labels are incorrect, the model learns incorrect patterns.

For example, transcription errors in speech datasets can cause models to associate sounds with the wrong words. In computer vision systems, incorrectly labelled images can confuse object detection models.

Even small labelling error rates can significantly degrade model performance.

As datasets scale into millions of samples, identifying and correcting these errors becomes increasingly difficult.
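
One way to catch labelling errors before they reach training is to have two annotators label the same items and measure how often they agree beyond chance. The sketch below is illustrative only: the function names and the toy labels are assumptions, not part of any specific annotation tool. It computes Cohen's kappa and lists the items where the annotators disagree so they can be sent for review.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Fraction of items on which both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


def disagreements(labels_a, labels_b):
    """Indices of items the two annotators labelled differently."""
    return [i for i, (a, b) in enumerate(zip(labels_a, labels_b)) if a != b]


# Hypothetical labels from two annotators for the same six images.
annotator_a = ["cat", "dog", "dog", "cat", "bird", "dog"]
annotator_b = ["cat", "dog", "cat", "cat", "bird", "dog"]

kappa = cohens_kappa(annotator_a, annotator_b)
review_queue = disagreements(annotator_a, annotator_b)
print(f"kappa={kappa:.3f}, items to review={review_queue}")
```

A kappa close to 1 indicates strong agreement. Lower values suggest that the labelling guidelines, or the labels themselves, need another look.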

2. Demographic Bias

Another common problem is dataset imbalance.

If certain demographic groups are underrepresented in the training data, AI systems often perform worse for those groups.

This issue has been widely documented across AI applications, including facial recognition, healthcare diagnostics, and hiring algorithms.

One well-known study found dramatic differences in accuracy across demographic groups. The Gender Shades study, led by computer scientist Joy Buolamwini with Timnit Gebru, evaluated commercial facial analysis systems that classify gender and found error rates as high as 34.7% for darker-skinned women, compared with at most 0.8% for lighter-skinned men.

The likely cause was dataset imbalance: the face datasets in wide use at the time, including the benchmarks the study examined, consisted overwhelmingly of lighter-skinned faces.
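
Gaps like these only become visible when evaluation results are broken down by group rather than reported as a single overall number. The following is a minimal sketch of such a disaggregated evaluation, assuming each evaluation record carries a group tag, the true label, and the predicted label. The group names and data are hypothetical.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: error rate} for (group, y_true, y_pred) records."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        if y_true != y_pred:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Hypothetical evaluation results: (group, true label, predicted label).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

rates = error_rate_by_group(records)
# The gap between the best- and worst-served groups is a simple bias signal.
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

In this toy data the overall error rate is 25%, which hides the fact that every error falls on one group.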

3. Noisy or Low-Signal Inputs

Data quality issues also occur at the raw input level.

Examples include:

- speech recordings with heavy background noise

- low-resolution or poorly lit images

- incomplete sensor data in IoT systems

Low signal-to-noise data makes it harder for machine learning models to learn meaningful patterns from AI training data. When large amounts of noisy data are included in training sets, the model must effectively learn through the noise.

This leads to reduced accuracy and more unstable models.
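
Signal-to-noise ratio (SNR) can be measured and used to screen inputs before training. The sketch below assumes that a separate noise estimate is available, for example from a silent stretch of the same recording. The 15 dB threshold is a hypothetical value chosen for illustration, not a recommended standard.

```python
import math

MIN_SNR_DB = 15.0  # hypothetical acceptance threshold, tune per project


def mean_power(samples):
    """Average power of a sequence of amplitude samples."""
    return sum(s * s for s in samples) / len(samples)


def snr_db(signal, noise):
    """Signal-to-noise ratio in decibels: 10 * log10(P_signal / P_noise)."""
    p_signal = mean_power(signal)
    p_noise = mean_power(noise)
    if p_noise == 0:
        return math.inf   # no measurable noise
    if p_signal == 0:
        return -math.inf  # no measurable signal
    return 10 * math.log10(p_signal / p_noise)


# Toy samples: the signal amplitude is ten times the noise amplitude.
signal = [1.0, -1.0, 1.0, -1.0]
noise = [0.1, -0.1, 0.1, -0.1]

snr = snr_db(signal, noise)
usable = snr >= MIN_SNR_DB
print(f"SNR = {snr:.1f} dB, usable = {usable}")
```

A tenfold amplitude ratio corresponds to a hundredfold power ratio, which is why this example comes out at roughly 20 dB.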

4. Missing Metadata

Many datasets lack important contextual information.

Metadata is a critical component of high-quality machine learning datasets and well-structured AI training data.

Metadata such as the following lets teams balance and evaluate data by context, which can substantially improve model performance:

- speaker accent or dialect

- geographic region

- recording environment

- device type

- demographic attributes

Without metadata, it becomes difficult to analyse where a model performs poorly or detect bias in predictions.

Metadata is especially important in multilingual and multicultural environments where language, context, and behaviour vary widely.
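
Metadata gaps are easy to measure before training begins. The sketch below reports, for each required field, the share of records where it is missing or empty. The field names and sample records are assumptions made for illustration.

```python
# Hypothetical set of fields every record is expected to carry.
REQUIRED_FIELDS = ("accent", "region", "environment", "device")


def missing_field_rates(records, required=REQUIRED_FIELDS):
    """Share of records in which each required field is absent or empty."""
    if not records:
        raise ValueError("no records to audit")
    rates = {}
    for field in required:
        missing = sum(1 for r in records if r.get(field) in (None, ""))
        rates[field] = missing / len(records)
    return rates


# Toy records with one gap each in accent, region, and device.
records = [
    {"accent": "en-ZA", "region": "Cape Town", "environment": "office", "device": "phone"},
    {"accent": "en-KE", "region": "", "environment": "market", "device": "phone"},
    {"accent": None, "region": "Lagos", "environment": "street", "device": "headset"},
    {"accent": "en-IN", "region": "Pune", "environment": "home"},
]

gaps = missing_field_rates(records)
print(gaps)
```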

Real-World Case Studies of Dataset Failures

Facial Recognition Bias

Facial recognition systems have repeatedly demonstrated demographic bias due to unbalanced datasets.

Early commercial systems performed extremely well on lighter-skinned men but poorly on darker-skinned women because the training datasets contained far fewer examples of darker-skinned and female faces.

These findings prompted widespread debate about fairness in AI and led several major technology companies to reevaluate their facial recognition systems.

Medical AI and Skin Tone

A similar issue has appeared in healthcare AI.

Researchers studying dermatology models found that many diagnostic algorithms performed significantly worse on darker skin tones because the datasets used for training contained mostly images of lighter skin.

When tested on more diverse datasets, some models experienced performance drops of 27–36%, revealing how dataset bias can affect real-world medical decisions (Daneshjou et al., Disparities in Dermatology AI Performance on a Diverse, Curated Clinical Image Set).

Recruitment Algorithms

Dataset bias has also affected hiring algorithms.

Several automated recruitment systems trained on historical hiring data inadvertently learned patterns that disadvantaged certain groups. Because the datasets reflected historical hiring biases, the algorithms reproduced those patterns.

These cases demonstrated how AI systems can unintentionally reinforce historical inequalities when trained on biased data.

Why This Matters Globally — and Especially in Emerging Markets

Poor dataset quality affects organizations everywhere.

However, its impact can be even more pronounced in regions where high-quality datasets are less readily available.

In many parts of the world, including Africa, Asia, and Latin America, linguistic diversity and environmental variation make data collection more complex.

For example:

- hundreds of regional accents may exist within a single language

- multilingual speakers frequently switch languages within a sentence

- recordings may occur in noisy environments such as markets or public transport

If AI systems are trained primarily on datasets collected elsewhere, they may struggle to perform reliably in these contexts.

Organisations building AI systems for global audiences must therefore prioritise representative, high-quality AI training data and well‑balanced machine learning datasets.

How Organisations Can Improve Dataset Quality

Gartner identifies poor data quality as a major enterprise risk and cost driver, and IBM's estimate puts the broader economic impact of bad data in the United States alone at about $3.1 trillion annually. With costs on that scale, the quality of AI training data has become a critical factor in successful machine learning systems.

Organisations building AI systems increasingly treat datasets as strategic infrastructure rather than temporary project assets.

Common best practices include:

| Practice | Description |
|---|---|
| Structured Data Collection | Collect data from diverse environments, speakers, and contexts to ensure broad representation. |
| Multi-Stage Annotation | Use multiple reviewers and quality control processes to reduce labeling errors. |
| Dataset Documentation | Maintain detailed documentation describing how datasets were collected and annotated. |
| Continuous Dataset Evaluation | Regularly audit datasets for bias, noise, and inconsistencies. |
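
Part of a continuous dataset evaluation can be automated. As a rough sketch, the check below flags any category whose share of the dataset falls under a chosen minimum. The 10% threshold and the accent tags are hypothetical, and a suitable minimum depends on the application.

```python
from collections import Counter


def underrepresented(values, min_share):
    """Return {value: share} for values below min_share of the dataset."""
    counts = Counter(values)
    total = sum(counts.values())
    return {
        value: count / total
        for value, count in counts.items()
        if count / total < min_share
    }


# Hypothetical accent tags for 100 recordings.
accents = ["accent_a"] * 70 + ["accent_b"] * 25 + ["accent_c"] * 5

flagged = underrepresented(accents, min_share=0.10)
print(flagged)
```

Running a check like this on every dataset release makes representation gaps visible before a model is trained on them.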

How Way With Words Delivers High‑Quality AI Training Data

Organisations building speech and language AI systems face particularly complex dataset challenges.

High-quality audio datasets for AI training require careful data collection, accurate transcription, and rigorous quality control. Organisations building machine learning systems often rely on specialised speech data collection services and professional transcription services to ensure reliable machine learning datasets.

Way With Words supports these needs through several processes designed to improve training dataset quality.

Professional Human Transcription

Speech datasets are transcribed by trained professionals rather than relying solely on automated tools. This reduces transcription errors and improves annotation accuracy.

Multi-Layer Quality Control

AI training datasets undergo multiple quality checks to detect:

- transcription errors

- labeling inconsistencies

- formatting issues

These review stages help ensure reliable training data.
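
A generic way to quantify transcription errors during review is word error rate (WER): the number of word substitutions, insertions, and deletions needed to turn a transcript into a trusted reference, divided by the length of the reference. The sketch below is a plain illustration of that metric with made-up sentences. It is not a description of any particular vendor's process.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of a hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Made-up example: one substitution ("fox" -> "box") and one insertion ("high").
reference = "the quick brown fox jumps"
hypothesis = "the quick brown box jumps high"

wer = word_error_rate(reference, hypothesis)
print(f"WER = {wer:.2f}")
```

Because insertions count as errors, WER can exceed 1.0 when a transcript is much longer than its reference.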

Accent and Dialect Coverage

Speech data collection includes diverse accents and regional speech patterns, improving model performance across different user groups.

Noise Handling and Audio Quality Standards

Audio datasets are evaluated for signal-to-noise ratio to ensure recordings are usable for machine learning models.

Structured Metadata

Each dataset can include metadata such as:

- accent or region

- recording environment

- speaker characteristics

This allows machine learning engineers to analyze model performance across different contexts.

Better Data Is the Foundation of Reliable AI

Artificial intelligence is often portrayed as a problem of model design or computational power.

In reality, the quality of the training data often matters far more.

Poor datasets introduce hidden costs through labeling errors, demographic bias, noisy recordings, and missing metadata. These problems can lead to inaccurate models, costly retraining cycles, and delayed product launches.

As AI systems continue to expand into critical areas such as healthcare, finance, and public infrastructure, dataset quality will only become more important.

Organisations that invest in high-quality, well-documented datasets gain a significant advantage: more reliable AI systems, faster development cycles, and greater trust from users.

In the long run, better data is the most reliable way to build better AI.

FAQ: AI Training Data Quality

Why is AI training data quality important?

AI models learn patterns from the datasets used during training. If AI training data contains errors, bias, or noise, machine learning models may produce unreliable or unfair results. High‑quality training datasets improve accuracy, reliability, and fairness in AI systems.

What happens when machine learning datasets are poor quality?

Poor machine learning datasets can lead to incorrect predictions, biased decision‑making, and expensive retraining cycles. In many AI projects, data quality issues are the primary reason models fail in production.

How can organisations improve AI dataset quality?

Organisations can improve AI dataset quality by implementing structured data collection, multi‑stage human annotation, strong quality‑control pipelines, and detailed dataset documentation.

What industries are most affected by poor AI training data?

Industries heavily dependent on machine learning models—such as healthcare, finance, speech recognition, autonomous systems, and recruitment technology—are particularly vulnerable to poor AI training datasets.